Trustpilot has become almost synonymous with online reviews. For many consumers and businesses, it is the default place to check whether a product or company is trustworthy. But as the software and AI ecosystem has matured, relying on a single review platform is no longer enough.

Modern buyers are more sophisticated. Founders, marketers, SaaS teams, and enterprise decision-makers now understand that different platforms reveal different layers of truth. Some are stronger for B2B software. Others surface early-stage AI tools. A few provide cleaner credibility signals than large, crowded marketplaces.

As a result, professionals increasingly build a multi-source validation workflow instead of depending solely on Trustpilot. The platforms below represent widely used alternatives that help paint a more complete and reliable picture when evaluating software, AI products, and digital services.

Why Professionals Are Looking Beyond Trustpilot

Trustpilot still plays an important role in the online reputation ecosystem. It offers broad consumer sentiment and can quickly reveal whether a brand has major customer satisfaction issues. However, its generalist nature also creates limitations for technical buyers and software-focused teams.

Today’s buyers are often looking for more specific signals. They want to understand how a tool performs in real business environments, whether support teams respond quickly, how integrations behave under load, and whether pricing scales predictably. These details rarely surface clearly on broad consumer review platforms.

Another major shift is the rise of AI-native products. Many newer tools launch and gain traction within specialized communities long before they accumulate large volumes of mainstream consumer reviews. Teams that rely only on Trustpilot risk missing important early signals.

Because of these changes, experienced buyers increasingly triangulate information across multiple review ecosystems. Each platform contributes a different perspective, and together they create a far more reliable decision framework.

G2: The Enterprise Validation Layer

Among Trustpilot alternatives, G2 has emerged as one of the most influential platforms for business software evaluation. It is widely used by procurement teams, IT leaders, and SaaS buyers who need structured, high-volume user feedback.

What distinguishes G2 is the depth of its review architecture. Instead of simple star ratings, the platform captures detailed user sentiment across usability, implementation complexity, support quality, and expected return on investment. For organizations running formal vendor selection processes, this granularity is extremely valuable.

G2 also provides visual comparison grids and category rankings that help teams quickly understand competitive positioning. When multiple vendors appear similar on paper, these structured insights often help clarify the decision.

For many professional buyers, G2 functions as the credibility checkpoint. Tools may be discovered elsewhere, but serious validation frequently happens here before contracts are signed or pilots begin.

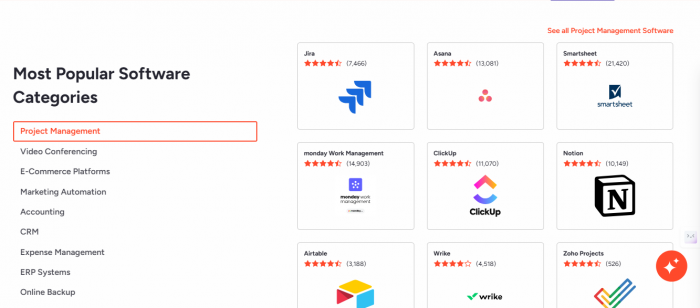

Capterra: The SMB Research Workhorse

Capterra continues to play a foundational role, particularly for small and mid-sized businesses evaluating operational software. Its interface is designed for accessibility, making it easy for non-technical buyers to begin comparing tools.

One of Capterra’s strengths is its filtering flexibility. Users can quickly narrow options by pricing tier, deployment model, company size, and core feature requirements. This makes it especially useful during the early stages of the buying journey when teams are still mapping available options.

While it may not always provide the deepest technical insight, Capterra excels at helping buyers build an initial shortlist. Many teams begin their research here before moving into more specialized platforms for deeper validation.

As AI continues to integrate into mainstream business software, Capterra’s role as an entry point remains highly relevant.

TechSuggest: A Curated Lens on Modern Software

As the software landscape becomes more crowded, curated discovery platforms are gaining importance. TechSuggest has been steadily building traction among users who want structured visibility into modern software categories without navigating overwhelming directories.

The platform emphasizes organized product listings and category clarity. This approach helps buyers quickly understand what a tool does and where it fits within the broader ecosystem. For teams exploring newer AI solutions, this guided structure can significantly reduce early research friction.

Many professionals use TechSuggest during the exploration phase, particularly when they are scanning unfamiliar categories. It often serves as a useful bridge between early discovery and deeper marketplace validation.

In fast-moving AI segments where new vendors appear frequently, curated environments like this are becoming increasingly valuable.

GeniusFirms: Fast Signal for AI and Tech Buyers

GeniusFirms has been appearing more frequently in workflows focused on AI and emerging technology evaluation. Its structure prioritizes clarity and straightforward company profiling, which makes quick credibility checks easier.

Instead of overwhelming users with excessive data density, the platform allows buyers to rapidly assess whether a product or vendor deserves further investigation. This is particularly useful in the current AI boom, where many new tools launch with limited historical feedback.

Teams often use GeniusFirms as an early filtering layer before committing time to deeper research. It helps answer the first important question: is this product worth serious consideration?

As the AI ecosystem continues expanding, lightweight credibility platforms like this are becoming an increasingly common part of the modern research stack.

AppCritica: Clean Discovery Without Marketplace Noise

AppCritica is gaining attention among teams that prefer a cleaner, more focused software discovery experience. In an era where many review directories feel overcrowded, platforms that emphasize clarity and signal are becoming more appealing.

The value of AppCritica lies in how quickly users can grasp product positioning. Instead of digging through dense review clusters, buyers can scan structured listings and identify which tools align with their needs.

This is particularly helpful during the shortlist-building phase. When evaluating multiple AI or SaaS products, early clarity reduces decision fatigue and speeds up research workflows.

As review fatigue becomes more common among buyers, platforms that reduce noise while preserving useful insight are likely to see growing adoption.

Software Advice: Guided Selection for Overwhelmed Buyers

Software Advice takes a slightly different approach from traditional review directories. Rather than relying purely on self-directed browsing, it often provides guided recommendations based on business requirements.

For teams entering unfamiliar software categories, this consultative layer can be extremely helpful. It reduces the cognitive burden of evaluating dozens of potential vendors and provides a more structured path toward shortlisting.

Organizations formalizing their procurement processes for the first time often find this model particularly useful. It brings additional structure to what can otherwise feel like an overwhelming evaluation process.

As AI capabilities spread across more business functions, guided recommendation platforms may become even more relevant for non-technical decision-makers.

Product Hunt: The Early Warning Radar

Product Hunt occupies a unique position in the research ecosystem. It is not a traditional review platform, but it remains one of the most effective ways to spot emerging AI tools early.

Many startups launch publicly on Product Hunt before appearing in formal marketplaces. For operators who want to stay ahead of the curve, monitoring launch activity here provides valuable directional signals.

The platform’s community engagement also offers insight into early product traction. Strong launch momentum often indicates rising market interest, although it should never be treated as final validation.

Experienced buyers use Product Hunt as a discovery radar, then follow up with deeper due diligence on more structured review platforms.

AlternativeTo: Competitive Landscape Mapping

AlternativeTo becomes particularly valuable once buyers have a known reference point. If a team is currently using one tool and wants to explore substitutes, this platform makes competitive discovery fast and intuitive.

Its community-driven model surfaces adjacent products that may not appear in standard category searches. This is especially useful during vendor replacement discussions or when exploring backup solutions.

In the rapidly evolving AI landscape, where feature overlap between tools is common, AlternativeTo helps teams quickly visualize the broader competitive field.

For many buyers, it plays a key role during the comparison and switching phase of the decision journey.

GetApp: Structured Business Software Comparison

GetApp, part of the Gartner Digital Markets family, provides another structured environment for business software evaluation. It combines user reviews with category filtering and product comparison tools.

Many small and mid-sized businesses include GetApp in their cross-check process alongside Capterra. The platform helps reinforce confidence when multiple sources align on product strengths and weaknesses.

Its business-focused orientation makes it particularly relevant for operational software decisions, including CRM, marketing automation, and productivity tools that increasingly incorporate AI capabilities.

SourceForge: Developer and Technical Perspective

SourceForge brings a slightly more technical lens to software discovery. Best known for its roots as an open-source project host, it has evolved into a broader software comparison environment.

For teams evaluating developer tools, infrastructure software, or AI engineering platforms, SourceForge can provide useful additional context. It often surfaces feedback from more technically inclined users.

In complex AI workflows where engineering considerations matter, having at least one technically oriented review source can improve decision quality.

PeerSpot: Enterprise Infrastructure Insight

PeerSpot focuses heavily on enterprise IT decision-making, including security, data platforms, and infrastructure software. Large organizations often consult it during formal procurement cycles.

The platform emphasizes detailed enterprise user feedback, which can be particularly valuable for high-stakes technology investments. While not always the first stop for general SaaS buyers, it plays an important role in large-scale IT evaluations.

As AI infrastructure spending continues to grow globally, enterprise-focused review ecosystems like PeerSpot are becoming increasingly relevant.

Reddit: The Unfiltered Reality Check

Although Reddit is not a formal review platform, it remains one of the most candid sources of user sentiment available online. Technical communities and AI-focused forums frequently surface edge cases that polished reviews miss.

Experienced buyers treat Reddit carefully. It works best as a qualitative cross-check rather than a primary decision source. However, when patterns repeat across multiple independent threads, the signal can be extremely valuable.

Reddit is particularly good at exposing real-world friction points such as unexpected pricing behavior, integration challenges, and support responsiveness issues.

When combined with structured review platforms, it adds an important layer of ground truth.

How Smart Teams Replace Single-Platform Thinking

The biggest shift in modern software buying is methodological. High-performing teams no longer ask which single review site they should trust. Instead, they build layered validation workflows.

A typical professional research process now looks like this:

- Discovery begins on curated platforms and launch communities.
- Credibility is validated on structured review marketplaces.
- Reputation signals are checked across broader sentiment platforms.
- Alternatives are mapped to understand the competitive landscape.
- Finalists are tested through controlled pilots.

This multi-angle approach reduces the risk of expensive software mistakes, because a weakness that stays hidden on one platform tends to surface on another.
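For teams that track their research in a script or spreadsheet, the cross-checking idea can be sketched in a few lines of Python. This is only an illustrative sketch: the `SourceSignal` type, the `triangulate` function, the thresholds, and the sample ratings are all hypothetical, and the numbers would have to be collected by hand, since no platform API is assumed here. It puts each rating on a common 0 to 1 scale and flags a product when the sources disagree too much.

```python
from dataclasses import dataclass
from statistics import mean, pstdev


@dataclass
class SourceSignal:
    """One manually collected rating (hypothetical structure)."""
    source: str       # platform name, e.g. "G2"
    rating: float     # rating on the platform's own scale
    scale_max: float  # top of that scale, e.g. 5.0


def triangulate(signals, min_sources=3, max_spread=0.15):
    """Compare ratings across sources on a shared 0-1 scale.

    min_sources and max_spread are illustrative thresholds, not
    recommendations; tune them to your own risk tolerance.
    """
    if len(signals) < min_sources:
        # Too few platforms to call anything a pattern.
        return {"status": "insufficient_sources", "sources": len(signals)}

    scores = [s.rating / s.scale_max for s in signals]
    spread = pstdev(scores)  # population standard deviation of the scores
    status = "consistent" if spread <= max_spread else "conflicting"
    return {
        "status": status,
        "mean_score": round(mean(scores), 3),
        "spread": round(spread, 3),
        "sources": len(signals),
    }


# Made-up ratings for a fictional product.
sample = [
    SourceSignal("G2", 4.5, 5.0),
    SourceSignal("Capterra", 4.4, 5.0),
    SourceSignal("Trustpilot", 2.1, 5.0),
]
result = triangulate(sample)
print(result["status"])
```

A "conflicting" result is not a verdict on the product. It is a prompt to read the underlying reviews and find out why one audience is less satisfied than the others before moving a finalist into a pilot.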

Final Takeaway

Trustpilot remains useful, especially for broad brand sentiment. But in the fast-moving world of SaaS and AI, relying on any single review platform is increasingly risky.

The most effective buyers in 2026 understand that truth in software evaluation is distributed. It emerges from patterns across multiple credible sources, not from one dominant marketplace.

Teams that adopt this mindset are better placed to make sound technology decisions. They are more likely to avoid costly migrations, reduce vendor regret, and build more resilient software stacks.

In the age of AI acceleration, that discipline is no longer optional. It is a competitive advantage that compounds over time.
