Banana provides autoscaling GPUs for high-throughput inference. It scales GPUs up and down automatically to keep costs low and performance high. Users deploy machine learning models as containers that expose an init function and an inference function. The platform load-balances requests across endpoints, and its observability tools monitor request traffic, latency, and errors in real time.
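
To illustrate the init-plus-inference pattern described above, here is a minimal Python sketch. It is not Banana's SDK: `ModelServer`, `init`, `inference`, and the request shape are hypothetical names chosen for illustration, and the "model" is a placeholder function. The idea is that setup runs once when the container starts, and each request then reuses the loaded state.

```python
from typing import Any, Callable, Dict

Context = Dict[str, Any]
Payload = Dict[str, Any]


class ModelServer:
    """Hypothetical stand-in for a serving container (not a real Banana API)."""

    def __init__(
        self,
        init_fn: Callable[[], Context],
        inference_fn: Callable[[Context, Payload], Payload],
    ) -> None:
        self._init_fn = init_fn
        self._inference_fn = inference_fn
        self._context: Context | None = None

    def start(self) -> None:
        # Run the expensive setup exactly once, at container start.
        self._context = self._init_fn()

    def handle(self, request: Payload) -> Payload:
        # Each request reuses the state produced by init.
        if self._context is None:
            raise RuntimeError("call start() before handle()")
        return self._inference_fn(self._context, request)


def init() -> Context:
    # Placeholder model; a real init would load weights onto the GPU.
    return {"model": lambda text: text.upper()}


def inference(context: Context, request: Payload) -> Payload:
    # Run the already-loaded model on the request payload.
    return {"output": context["model"](request["prompt"])}


server = ModelServer(init, inference)
server.start()
result = server.handle({"prompt": "hello"})
print(result)  # {'output': 'HELLO'}
```

Keeping model loading in `init` rather than in `inference` means the slow setup cost is paid once per container rather than once per request, which matters when containers are started and stopped by an autoscaler.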

Simplified AI infrastructure setup for developers

Made GPU deployment accessible without deep system knowledge

Had a supportive community prior to migration

Offered easy integration via SDKs

Provided cloud-based scalable AI services

Always-on VM options lack autoscaling and cost more

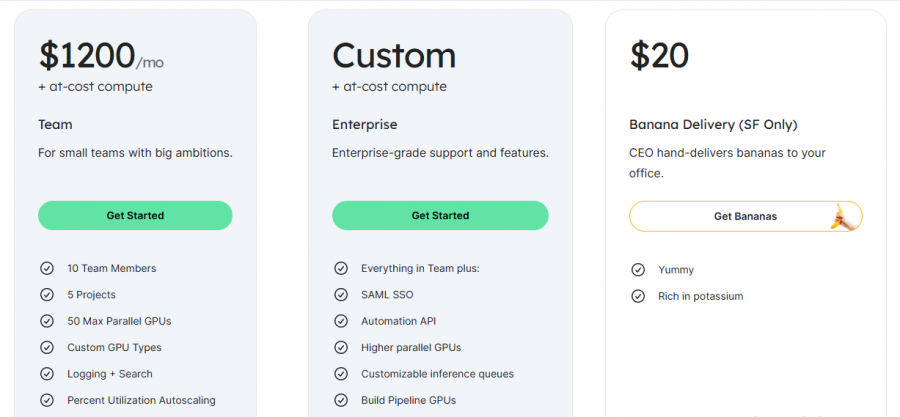

*Price last updated on Mar 3, 2026. Visit banana.dev's pricing page for the latest pricing.